The vision: multiple coding agents, working on independent tasks at once, all merging to a single PR. No waiting. No sequential bottlenecks.

Here's what nobody tells you: you can't actually run parallel AI coding agents without a plan that's been decomposed for parallel execution. If your subtasks share state, touch the same files, or lack clear scope boundaries, your agents will collide. The bottleneck isn't agent speed. It's the spec.

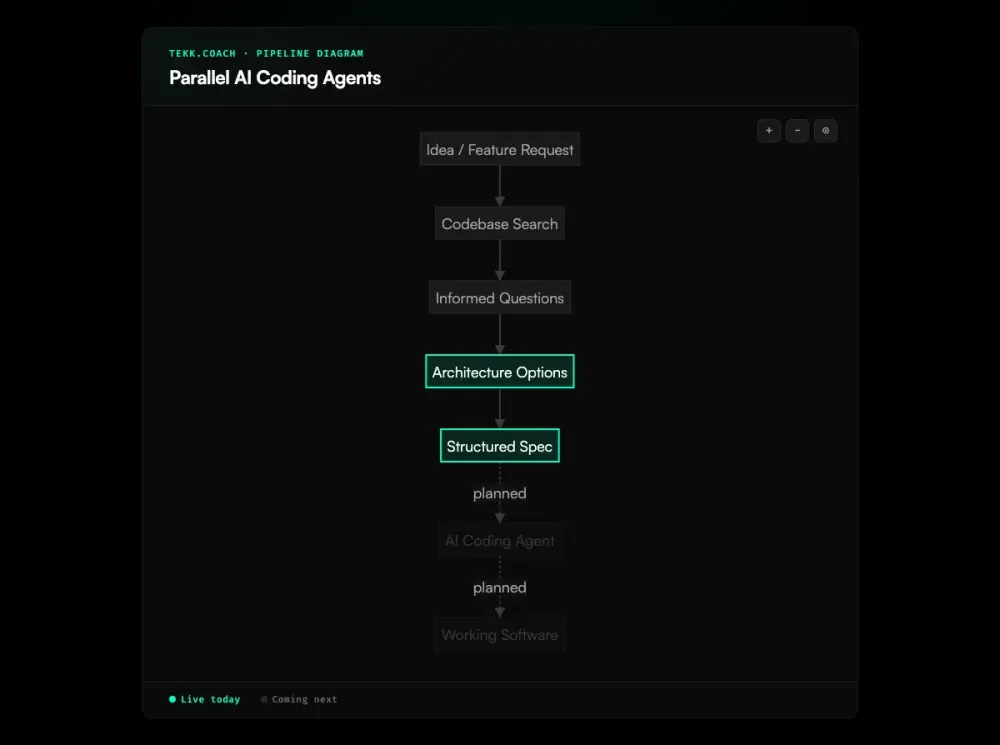

Tekk solves the spec problem today. It reads your codebase, breaks your feature into dependency-ordered subtasks, and maps which ones can run in parallel. The parallel execution plan is ready. The dispatch layer — sending those tasks to Cursor, Codex, and Claude Code simultaneously — is coming next.

[Try Tekk.coach Free →]

How Tekk handles parallel AI coding agents

Part A — Live today: the parallel execution plan

Before any agent runs in parallel, someone has to figure out what "in parallel" actually means for a given feature. Which subtasks are truly independent? Which ones share a database table, an API route, a shared component? Which ones must finish before others can start?

This is the decomposition problem. It's where most parallel agent workflows break down. Developers spin up Cursor's multi-agent mode or fire off several Claude Code instances and then realize the task boundaries were never thought through. Conflicts, duplicate work, and incoherent PRs follow.

Tekk reads your codebase before generating anything, running semantic search, file search, and directory analysis against your actual repo on GitHub, GitLab, or Bitbucket. It finds the files each subtask will touch. It maps ordering dependencies. The plan it produces groups subtasks into parallel execution waves: these three tasks can run at the same time, this one waits for them. Each task has acceptance criteria and file references. That's the spec your agents need. This is spec-driven development applied to multi-agent execution: the spec is what makes parallelism safe.

This is live today.
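To make the idea of execution waves concrete, here is a minimal sketch of one way to group subtasks by dependency. It is an illustration under stated assumptions, not Tekk's implementation: the function name `group_into_waves` and the task names are hypothetical, and the input is assumed to be a mapping from each subtask to the subtasks it must wait for.

```python
def group_into_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves so every prerequisite sits in an earlier wave."""
    remaining = {task: set(deps) for task, deps in dependencies.items()}
    waves: list[list[str]] = []
    done: set[str] = set()
    while remaining:
        # A task is ready once all of its prerequisites are done.
        ready = sorted(task for task, deps in remaining.items() if deps <= done)
        if not ready:
            # Nothing can start: a cycle, or a prerequisite that is not in the plan.
            raise ValueError(f"unresolvable dependencies among: {sorted(remaining)}")
        waves.append(ready)
        done.update(ready)
        for task in ready:
            del remaining[task]
    return waves


# Hypothetical feature: three independent subtasks, then one that needs all three.
plan = {
    "add-db-migration": set(),
    "build-api-route": set(),
    "create-ui-component": set(),
    "wire-up-integration": {"add-db-migration", "build-api-route", "create-ui-component"},
}
waves = group_into_waves(plan)
```

Here the first wave holds the three independent subtasks and the second holds the integration task that waits for them.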

Part B — Coming next: parallel agent dispatch

Once you approve the plan, Tekk will dispatch the independent subtasks to your coding agents simultaneously. Cursor, Codex, and Claude Code will connect via OAuth, the same flow as connecting GitHub today. The tasks in each wave fire at the same time. Each agent works in an isolated branch, and the results merge into a shared feature branch. Progress tracks on a kanban board in real time. When the last wave completes, you get one PR to review.

We're being direct about the timeline because the planning layer is genuinely useful right now. You can use it today with whatever agents you already have. The automated dispatch is what we're building next.
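Because dispatch has not shipped, the following is only a sketch of the general pattern: start every task in a wave together, and wait for the whole wave before starting the next. `run_agent` is a hypothetical stand-in for handing a subtask to a coding agent; it does not represent any real Cursor, Codex, or Claude Code API.

```python
import asyncio


async def run_agent(task: str) -> str:
    """Hypothetical stand-in for handing one subtask to a coding agent."""
    await asyncio.sleep(0.01)  # placeholder for the agent's real work
    return f"{task}: done"


async def dispatch(waves: list[list[str]]) -> list[str]:
    results: list[str] = []
    for wave in waves:
        # Tasks within a wave start together; the next wave waits for all of them.
        results.extend(await asyncio.gather(*(run_agent(task) for task in wave)))
    return results


# Hypothetical two-wave plan.
example_waves = [["api-route", "ui-component"], ["integration-test"]]
results = asyncio.run(dispatch(example_waves))
```

If you run agents by hand today, the same rule applies: do not start a wave until every task in the previous one has finished and merged.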

Key benefits

Subtask decomposition by dependency (live)

Tekk maps which subtasks are independent and which have ordering constraints before you run a single agent. Subtasks that share scope or files get serialized; subtasks that are cleanly independent get parallelized.

Parallel execution wave mapping (live)

Every Tekk plan groups independent subtasks into execution waves. You see exactly which tasks can run simultaneously. The plan is ready to hand to any coding agent today.

Works with agents you already have (coming next)

Cursor, Codex, Claude Code. You're already paying for them. Tekk connects via OAuth and coordinates them. You don't switch tools; you add an orchestration layer.

Single PR output (coming next)

All parallel agent work merges to one shared feature branch. One review cycle, not the branching chaos that comes from ad-hoc parallel execution.

How it works

Step 1: Connect your repo

Link your GitHub, GitLab, or Bitbucket repository. Tekk reads the codebase: languages, frameworks, file structure, patterns. Every step that follows is grounded in your actual code.

Step 2: Describe the feature

Tell Tekk what you're building. The agent asks 3–6 questions grounded in what it found in your repo. These are not generic planning questions; they are specific to your stack.

Step 3: Run a team of coding agents with the generated plan

Tekk produces a spec with subtasks ordered by dependency, parallel execution waves mapped out, file references included, and acceptance criteria per task. Approve it, then hand it to your agents, or wait for dispatch to do it automatically.

Step 4: Dispatch (coming next)

Tekk will fire independent subtasks in parallel to the agents you've connected via OAuth. Each agent works in an isolated branch. Progress tracks live on the kanban board.

Step 5: Review one PR

All agent work merges to a shared feature branch. One pull request to review. Merge when ready.

Who this is for

Developers trying to parallelize AI coding

You've run multiple Claude Code or Cursor instances manually. You know the potential. You've also hit the coordination problems: merge conflicts, overlapping scope, agents making incompatible choices. Tekk's decomposition gives you the foundation to actually parallelize without the chaos.

Teams managing multiple agents

If you're coordinating Cursor, Codex, or Claude Code across a team, you know how much time goes into figuring out who (or what) works on what. Tekk's codebase-grounded planning makes those decisions automatic and explicit. Teams ready to go further can explore AI agent orchestration for a full view of how dependency-mapped dispatch works at scale.

Anyone who has tried Conductor and wants a plan-first approach

Conductor and Vibe Kanban are good at managing parallel agent execution, but they assume you already have well-specified, independent tasks. Tekk generates those tasks. If you're hitting the decomposition bottleneck (agents conflicting, scope creeping, PRs that don't cohere), that's the problem Tekk solves.

What are parallel AI coding agents?

Running parallel AI coding agents means running multiple coding agents (Claude Code, Cursor, Codex) simultaneously on independent subtasks of the same codebase, then merging their work back to a shared branch. Instead of waiting for one agent to finish before starting the next, you dispatch them all at once.

The technical setup is well understood: git worktrees give each agent its own working directory on its own branch, created from main, while all of them share one underlying repository. Agents can't overwrite each other's files because they work in separate directories. Each agent's branch is merged as soon as that agent completes, which keeps conflicts from accumulating.
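As an illustration of that setup, the sketch below builds one `git worktree add` command per subtask, each creating a new branch from `main` in its own directory. The `agent/` branch prefix, the `../worktrees` directory, and the task names are assumptions chosen for the example; the function only builds the commands and does not run them.

```python
from pathlib import PurePosixPath


def worktree_commands(tasks: list[str], base: str = "main",
                      root: str = "../worktrees") -> list[list[str]]:
    """Build one `git worktree add` command per task, each on a new branch."""
    commands = []
    for task in tasks:
        branch = f"agent/{task}"  # hypothetical branch naming scheme
        path = str(PurePosixPath(root) / task)
        # Form: git worktree add -b <new-branch> <path> <commit-ish>
        commands.append(["git", "worktree", "add", "-b", branch, path, base])
    return commands


commands = worktree_commands(["api-route", "ui-component"])
```

Each command list can be passed to `subprocess.run(cmd, check=True)` from inside the repository, after which every agent is pointed at its own directory.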

The time math is simple. Two 10-minute tasks run sequentially cost 20 minutes. Run them in parallel: 10 minutes. At the scale of a quarterly roadmap with dozens of independent subtasks, that compression adds up. Teams running parallel agent workflows have reported shipping quarterly roadmaps in 3–4 weeks. Structured AI project planning is what keeps that roadmap coherent when multiple agents are executing simultaneously.
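The same arithmetic extends to a multi-wave plan. In the sketch below, sequential time is the sum of every task's duration, while parallel time is the sum of the slowest task in each wave. This assumes there are enough agents to run a whole wave at once and ignores merge and review overhead; the durations are made-up numbers.

```python
def sequential_minutes(waves: list[list[int]]) -> int:
    """Total time if every task runs one after another."""
    return sum(sum(wave) for wave in waves)


def parallel_minutes(waves: list[list[int]]) -> int:
    """Total time if each wave runs concurrently: a wave lasts as long as its slowest task."""
    return sum(max(wave) for wave in waves)


two_tasks = [[10, 10]]            # the example from the text
two_waves = [[10, 10, 5], [8]]    # hypothetical plan: three tasks, then one dependent task

print(sequential_minutes(two_tasks), parallel_minutes(two_tasks))  # 20 10
print(sequential_minutes(two_waves), parallel_minutes(two_waves))  # 33 18
```

The dependent task in the second wave still has to wait, which is why the saving depends on how much of the plan is truly independent.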

What separates the workflows that succeed from those that don't is task decomposition — whether the tasks given to agents are genuinely independent. In the current multi-agent coding platform landscape, tools cover different layers: Cursor (up to 8 parallel agents) and Claude Code (Agent Teams) handle execution. Vibe Kanban and Conductor handle workflow management. Tekk addresses the planning layer — codebase-grounded decomposition that makes safe parallel execution possible.

Frequently asked questions

What are parallel AI coding agents?

Parallel AI coding agents are multiple AI coding assistants — Claude Code, Cursor, Codex — working simultaneously on different, independent subtasks of the same codebase. Each agent runs in an isolated branch (typically via git worktrees) so they don't overwrite each other's work. Results merge to a shared feature branch when each agent completes. The goal is to compress build time by running independent tasks concurrently rather than one after another.

How do you run a team of coding agents without creating merge conflicts?

Each agent needs its own isolated branch — git worktrees are standard. More importantly, the tasks themselves have to be genuinely independent: no shared files, no shared state, no ordering dependencies. When tasks overlap, you get conflicts regardless of the isolation layer. This is why decomposition quality determines whether a parallel agent workflow succeeds. The isolation is easy. The decomposition is the hard part.
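One part of that independence check, shared files, is easy to sketch. Assuming you already know which files each task is expected to touch, the hypothetical helper below reports every pair of tasks whose file sets overlap. The task names and paths are invented for the example.

```python
from itertools import combinations


def overlapping_pairs(files_by_task: dict[str, set[str]]) -> dict[tuple[str, str], set[str]]:
    """Return each pair of tasks that touch a common file, with the shared files."""
    conflicts: dict[tuple[str, str], set[str]] = {}
    for a, b in combinations(sorted(files_by_task), 2):
        shared = files_by_task[a] & files_by_task[b]
        if shared:
            conflicts[(a, b)] = shared
    return conflicts


# Hypothetical tasks and the files each is expected to modify.
touched = {
    "add-login-route": {"api/routes.py", "api/auth.py"},
    "add-signup-route": {"api/routes.py", "api/users.py"},
    "style-landing-page": {"web/landing.css"},
}
conflicts = overlapping_pairs(touched)
```

Here the two route tasks both edit `api/routes.py`, so they should run one after the other, while the styling task can run alongside either. A file check like this does not catch shared state such as a database table, which still needs a closer look.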

What is the best tool for Claude Code parallel agents?

Claude Code supports parallel agents natively through Agent Teams, in which multiple Claude instances coordinate on a shared codebase. For generating the plan before dispatch, Tekk produces codebase-grounded specs with dependency-ordered subtasks mapped into parallel execution waves, ready for Claude Code to execute. For managing the execution workflow, Vibe Kanban and Conductor are the tools most developers reach for. They serve different layers: Tekk handles planning, Claude Code handles execution, and Vibe Kanban or Conductor handles coordination.

How does Tekk decompose tasks for parallel coding agents?

Tekk reads your actual repo before generating any plan — semantic search, file search, directory analysis. The agent asks questions grounded in your code, then produces a spec with subtasks ordered by dependency and grouped into parallel execution waves. Each subtask includes file references, acceptance criteria, and explicit dependency markers. You see exactly which tasks can run simultaneously and which must wait — the prerequisite for safe parallel dispatch.
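To picture what such a spec might contain, here is a minimal sketch of a subtask record plus a check that every dependency is scheduled in an earlier wave. The field names, the `wave_violations` helper, and the example tasks are all hypothetical; this is not Tekk's actual spec format.

```python
from dataclasses import dataclass, field


@dataclass
class Subtask:
    # All field names are illustrative assumptions, not a real schema.
    id: str
    wave: int
    files: list[str]
    acceptance_criteria: list[str]
    depends_on: list[str] = field(default_factory=list)


def wave_violations(plan: list[Subtask]) -> list[str]:
    """List every dependency that is missing or not scheduled in an earlier wave."""
    by_id = {task.id: task for task in plan}
    problems = []
    for task in plan:
        for dep in task.depends_on:
            if dep not in by_id:
                problems.append(f"{task.id}: unknown dependency {dep}")
            elif by_id[dep].wave >= task.wave:
                problems.append(f"{task.id}: {dep} must run in an earlier wave")
    return problems


example_plan = [
    Subtask("add-users-table", 1, ["db/migrations/001_users.sql"],
            ["migration applies cleanly"]),
    Subtask("add-signup-route", 2, ["api/users.py"],
            ["POST /signup returns 201"], depends_on=["add-users-table"]),
]
problems = wave_violations(example_plan)
```

A check like this is a cheap guard before handing a plan to agents: an empty list means no task is scheduled ahead of something it depends on.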

What is a multi-agent coding platform?

A multi-agent coding platform coordinates multiple AI coding agents on the same project, handling task allocation, agent dispatch, progress tracking, and merge management. The space has split into layers: planning (Tekk, which decomposes the spec into independent tasks), execution (Cursor, Claude Code, and Codex, which write the code), and management (Vibe Kanban and Conductor, which coordinate the workflow). Most teams are watching for tools that converge on the full stack, from decomposition to PR.

What are parallel coding agents and how do they work?

Parallel coding agents are AI coding tools capable of autonomous code execution, such as Cursor Agents, Claude Code, or OpenAI Codex, running at the same time on separate tasks within the same repo. Each agent gets an isolated working copy, receives a scoped task with clear inputs and expected outputs, runs without human intervention mid-task, and pushes its changes to a branch. A developer or orchestrator reviews and merges. It's similar to managing a small team: you define the tasks clearly, hand them off, and review the results.

Is Tekk's parallel agent dispatch available now?

Not yet, and we want to be clear about that. The parallel execution plan — decomposed subtasks with dependency ordering and parallel wave mapping — is live today. The dispatch layer — sending those tasks to Cursor, Codex, and Claude Code simultaneously via OAuth, with real-time kanban tracking and single-PR output — is what we're building next. The planning value is real and usable right now. The automated dispatch is coming.

Start planning free

The bottleneck on parallel AI coding agents isn't the agents. It's the plan. Connect your repo, describe what you're building, and get a spec with subtasks already decomposed for parallel execution.

When the dispatch layer ships, your plan is ready. Until then, your agents are waiting for better specs.

[Start Planning Free →]