Run multiple AI coding agents on the same codebase. All merging to one PR.

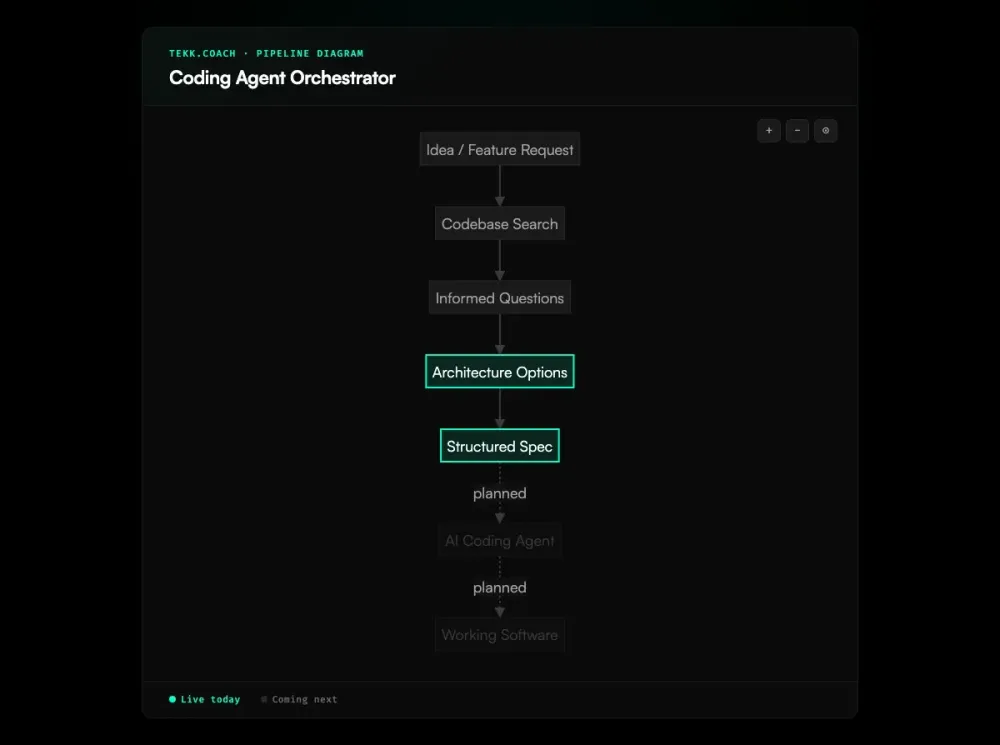

That's where this is going. Here's what's real today, and why the planning layer is the thing most people skip.

Today: Tekk generates the spec that makes multi-agent execution work. Every plan comes out with subtasks decomposed by dependency, file references baked in, scope defined. The spec is already an orchestration plan — before the orchestration layer even ships.

Coming next: Tekk dispatches those subtasks to Cursor, Codex, Claude Code, and Gemini via OAuth. Parallel execution waves. One shared feature branch. One PR at the end. Real-time kanban tracking while each agent does its job.

How Tekk.coach does AI agent orchestration

Part A: what's live today

Every feature you plan in Tekk comes out as a structured spec. Not a chat message. Not a paragraph you paste into Cursor and hope for the best. This is spec-driven development in practice: the spec is the primary artifact, not a byproduct of a chat session.

The agent reads your codebase before asking a single question. It searches your files, maps your dependencies, understands your architecture. Then it writes a plan with:

- Subtasks ordered by dependency — the execution sequence is built in

- File references per subtask — each agent knows exactly which files to touch

- Acceptance criteria per subtask — behavioral definitions, not vague descriptions

- A "Not Building" section — a hard scope boundary so agents don't wander

That structure isn't accidental. It's designed so a coding orchestrator can read the plan and dispatch correctly, without ambiguity. The spec is the orchestration plan.
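To make that structure concrete, here is a minimal sketch of a plan as data. It is not Tekk's actual schema: the class names, field names, and the `validate` helper are all hypothetical, chosen only to mirror the four parts listed above.

```python
from dataclasses import dataclass, field


@dataclass
class Subtask:
    """One unit of work in a plan. All field names are illustrative."""
    id: str
    title: str
    depends_on: list[str] = field(default_factory=list)  # ids that must finish first
    files: list[str] = field(default_factory=list)       # files this subtask may touch
    acceptance: list[str] = field(default_factory=list)  # behavioral acceptance criteria


@dataclass
class Plan:
    feature: str
    subtasks: list[Subtask]
    not_building: list[str] = field(default_factory=list)  # hard scope boundary

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means nothing is missing."""
        ids = {s.id for s in self.subtasks}
        problems = []
        for s in self.subtasks:
            for dep in s.depends_on:
                if dep not in ids:
                    problems.append(f"{s.id}: unknown dependency {dep}")
            if not s.files:
                problems.append(f"{s.id}: no file references")
            if not s.acceptance:
                problems.append(f"{s.id}: no acceptance criteria")
        return problems


# Example data is made up for illustration.
plan = Plan(
    feature="Password reset",
    subtasks=[
        Subtask("schema", "Add reset_tokens table", [],
                ["db/migrations/004_reset_tokens.sql"],
                ["Migration applies and rolls back cleanly"]),
        Subtask("api", "POST /reset endpoint", ["schema"],
                ["api/reset.py"],
                ["Returns 202 for any email address"]),
    ],
    not_building=["SMS-based reset"],
)
print(plan.validate())  # [] because every subtask has files, criteria, and known dependencies
```

A check like `validate` is the point of having structure at all: a subtask with no files or no acceptance criteria is exactly the kind of ambiguity an orchestrator cannot dispatch around.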

You can use these specs with your agents right now. Connect your repo, generate a plan, hand it to Cursor or Claude Code. The output quality difference is immediate.

Part B: what's coming next

Tekk will close the loop on execution.

When you approve a plan, Tekk takes over:

- Analyzes the subtask dependency graph

- Groups independent subtasks into parallel execution waves

- Batches related subtasks (shared files, shared context) into coherent jobs per agent

- Dispatches to Cursor, Codex, Claude Code, or Gemini via OAuth

- Has all agents push to a single shared feature branch

- Opens one PR when the last job completes

- Shows real-time progress on your kanban board (for example, 3 of 5 jobs done)

You watch it ship instead of driving it yourself.
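Grouping independent subtasks into waves is a standard graph technique (layered topological sorting). The sketch below shows the idea under simple assumptions; the function name and the sample subtask ids are hypothetical, and it says nothing about how Tekk's own execution layer will be implemented.

```python
def execution_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group subtasks into waves; each wave depends only on earlier waves.

    `deps` maps a subtask id to the ids it depends on. Every dependency
    is assumed to also appear as a key.
    """
    remaining = {task: set(d) for task, d in deps.items()}
    waves = []
    while remaining:
        # Everything with no unmet dependency can run now, in parallel.
        ready = sorted(t for t, d in remaining.items() if not d)
        if not ready:
            raise ValueError(f"dependency cycle among: {sorted(remaining)}")
        waves.append(ready)
        for t in ready:
            del remaining[t]
        for d in remaining.values():
            d.difference_update(ready)
    return waves


deps = {
    "schema": set(),
    "email-template": set(),
    "api": {"schema"},
    "ui": {"api"},
    "docs": {"api", "email-template"},
}
waves = execution_waves(deps)
print(waves)  # [['email-template', 'schema'], ['api'], ['docs', 'ui']]
```

Five subtasks collapse into three waves here. The first and last waves each hold two jobs that different agents could pick up at the same time.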

Key benefits

Tekk's plans map subtask dependencies automatically. You know which tasks can run in parallel before you dispatch a single agent. No manual decomposition, no dependency spreadsheets. (Live today.)

Independent subtasks get flagged. Dependent subtasks get sequenced. The plan tells you what can run simultaneously and what has to wait — your agents don't step on each other. (Live today.)
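One way to check that two "independent" subtasks really won't collide is to compare their file references. This is a sketch of that idea only, assuming each subtask lists the files it may touch; `file_conflicts` and the sample paths are hypothetical.

```python
from itertools import combinations


def file_conflicts(
    wave: list[str], files: dict[str, set[str]]
) -> list[tuple[str, str, set[str]]]:
    """Return pairs of subtasks in one wave that reference the same file."""
    conflicts = []
    for a, b in combinations(sorted(wave), 2):
        shared = files[a] & files[b]
        if shared:
            conflicts.append((a, b, shared))
    return conflicts


files = {
    "ui": {"web/ResetForm.tsx", "web/routes.ts"},
    "docs": {"docs/reset.md"},
    "nav": {"web/routes.ts", "web/Nav.tsx"},
}
conflicts = file_conflicts(["ui", "docs", "nav"], files)
print(conflicts)  # [('nav', 'ui', {'web/routes.ts'})]
```

Pairs that share a file are candidates for sequencing or for batching into a single job, so one agent owns the shared file.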

All agents push to the same feature branch. One PR to review. No branch juggling, no manual merges across four separate worktrees. (Coming next.)

Your kanban shows what's planned, in progress, and done across all your features today. When the execution layer ships, each card will show live agent progress: which jobs are running, which finished, which need your attention. (Live for planning. Coming next for execution.)

How it works

Step 1: Connect your repo. GitHub, GitLab, or Bitbucket. Takes two minutes.

Step 2: Describe the feature. One sentence or a paragraph. Tekk asks clarifying questions grounded in what it found in your code, not the generic questions you'd get from a blank chat window.

Step 3: Review options (when there's a real architectural choice). Two or three approaches with honest tradeoffs. Pick one, or skip it if the path is obvious.

Step 4: Get the spec (live today). A complete, structured plan streams into the editor in real time. Subtasks with dependencies, file references, acceptance criteria, scope boundaries. This is the document your team works from.

Step 5: Edit and approve. The spec is a living document. Change anything before you proceed.

Step 6: Execute (coming next). Tekk dispatches to your agents via OAuth. Parallel waves run. You watch the kanban.

Step 7: Review and merge (coming next). One PR. Review it on GitHub. Merge it. The card auto-completes.

Who this is for

Developers already running Cursor and Claude Code on the same project, burning mental energy on context management and merge conflicts — Tekk's planning layer cuts that overhead today. The orchestration layer eliminates it.

Teams who can see the parallelism opportunity in their work but can't easily act on it. Tekk's dependency mapping makes it concrete. You know what can run simultaneously before you start.

Solo founders who want to run a team of agents. One person with four agents in parallel is a real engineering team. The bottleneck is planning and coordination, not the agents themselves. That's what Tekk removes. Structured AI project planning before any agent runs is what makes that coordination possible.

Anyone whose agents keep building the wrong thing. Most coding agent failures trace back to a vague spec. If you've typed "add authentication to my app" into Cursor and gotten output that didn't fit your architecture, that's not an agent problem. It's a spec problem. Tekk fixes it at the source.

What is AI agent orchestration?

In software development, AI agent orchestration means coordinating multiple coding agents so they work in parallel on the same codebase without overwriting each other, and merging their output into one result.

A single coding agent (Cursor, Claude Code, Codex) is sequential by default. You give it a task, it runs, you wait, you review, you give it the next one. Orchestration breaks that bottleneck. Independent subtasks run simultaneously. A feature that would take one agent an hour might take four agents closer to fifteen minutes, provided the subtasks are independent enough to run side by side.
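The hour-versus-fifteen-minutes figure is the best case. A rough estimate, assuming each wave must finish before the next starts and using made-up durations, shows how dependencies eat into it. The function below is a hypothetical back-of-the-envelope model, not a measurement.

```python
def parallel_minutes(waves: list[list[int]], agents: int) -> int:
    """Estimate wall-clock minutes when each wave must finish before the next.

    `waves` holds per-subtask durations in minutes. Within a wave, subtasks
    are assigned longest-first to whichever agent currently has the least work.
    """
    total = 0
    for wave in waves:
        loads = [0] * agents
        for minutes in sorted(wave, reverse=True):
            loads[loads.index(min(loads))] += minutes
        total += max(loads)
    return total


independent = [[15, 15, 15, 15]]   # four unrelated subtasks, one wave
chained = [[15], [15, 15], [15]]   # the same 60 minutes of work, with dependencies

best_case = parallel_minutes(independent, agents=4)  # 15
with_deps = parallel_minutes(chained, agents=4)      # 45
one_agent = parallel_minutes(independent, agents=1)  # 60
```

Same total work, same four agents, three times the wall-clock time once the subtasks depend on each other. The shape of the dependency graph sets the ceiling on speedup, which is why the decomposition matters more than the agent count.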

The constraint in AI-assisted development has shifted. It's no longer how fast an agent writes code. It's whether the task decomposition going into that agent is any good. Most orchestration failures come down to a bad input spec: vague subtasks, unmapped dependencies, missing file context. Effective AI agent orchestration starts with a plan that's already decomposed by dependency before the first agent runs. Google's 2025 DORA Report found that higher AI adoption correlated with a 9% increase in bug rates and a 154% increase in PR size. Agents aren't the problem. The specs they run on are.

The current landscape includes developer frameworks — LangGraph, CrewAI, the OpenAI Agents SDK — for building orchestration systems from scratch, and coding-specific tools like Composio's Agent Orchestrator for managing agent fleets. Most of them share the same assumption: you arrive with a well-decomposed task structure. That assumption is the gap.

Tekk attacks it differently. The spec Tekk generates — subtasks by dependency, file references baked in, scope defined hard — is exactly what a coding orchestrator needs to dispatch correctly. Tekk produces that spec from your actual codebase. No other tool in this space does that.

FAQ

What is AI agent orchestration? AI agent orchestration is coordinating multiple AI agents so they work together on a complex task. An orchestrator breaks the task into subtasks, assigns them to appropriate agents, runs independent subtasks in parallel, manages dependencies, and combines the results. In software development, this usually means dispatching coding tasks to agents like Cursor or Codex and merging their output into one pull request.

What is a coding agent orchestrator? A coding agent orchestrator sits above your coding agents — Cursor, Codex, Claude Code — and manages how work gets distributed across them. It decides which subtasks can run in parallel, which have to wait, which agent handles which job, and how the results get merged. The orchestrator handles the coordination so you don't have to do it manually.

How does Tekk.coach handle AI agent orchestration for coding? Tekk works in two layers. Today, Tekk generates specs with subtasks decomposed by dependency, file references included, and clear scope boundaries — exactly what a coding orchestrator needs as input. Coming next, Tekk closes the loop: analyzes the approved spec, groups subtasks into parallel execution waves, dispatches to Cursor, Codex, Claude Code, and Gemini via OAuth, and delivers one PR at the end.

Is Tekk's multi-agent orchestration available today? The dispatch layer is not live yet. What's live is the planning layer: codebase-aware spec generation, dependency-mapped subtasks, kanban management, and review mode. These capabilities are what make orchestration work once dispatch ships. If you want to use Tekk's specs with your agents today, you can — connect your repo, generate a plan, and hand the structured spec to Cursor or Claude Code directly.

How does Tekk compare to Conductor for AI agent orchestration? Conductor and similar tools handle execution and dispatch — routing tasks to agents, managing agent fleets, dealing with CI failures and merge conflicts. They assume you arrive with a task structure already defined. Tekk generates that structure from your codebase before dispatch happens. The two approaches fit together: Tekk owns the planning layer, and Tekk's coming execution layer will handle dispatch the same way Conductor does — with a codebase-grounded spec as the starting point rather than a paragraph of instructions.

What agents does Tekk support for orchestration? Cursor (via Cloud Agents API, webhook-driven), Codex (OpenAI's cloud coding agent), Claude Code (extending the Tekk agent SDK with write-capable MCP tools), and Gemini (design-first tasks, then handoff to Cursor or Codex). These are the agents in the coming execution layer. Today, Tekk's plans work with any agent you run manually — the structured spec improves output regardless of which one you're using.

What is AI agent orchestration for coding and how does it work? AI agent orchestration for coding breaks a development task into subtasks, determines which can run in parallel and which have to run in sequence, dispatches those subtasks to specialized coding agents, and merges the output into one pull request. The hard part is not the dispatch. It's the task decomposition and dependency mapping before dispatch. That's a planning problem — and it's what Tekk solves.

Start building

The orchestration dispatch layer is coming. The planning layer — codebase-aware specs with dependency mapping built in — is live right now.

Connect your repo. Describe what you're building. Get a spec that's ready for agents.