Writing specs manually is slow. Skipping specs causes rework. Prompting a chat AI to generate them produces output that doesn't know your codebase, your stack, or your constraints — and your coding agents flail against it just as much as against a vague paragraph.

Tekk.coach automates spec generation differently: the agent reads your actual codebase before writing a single word. Every question, every architectural option, every subtask in the output references your real files, patterns, and dependencies. The spec you get is grounded, complete, and executable — not a formatted template with your feature name swapped in.

[Try Tekk.coach Free →]

Key Benefits

Grounded in your codebase, not a generic template. Tekk reads your repo before generating anything, so the resulting spec references actual files, patterns, and constraints. Your coding agents get precise, specific instructions instead of a generic document with your feature name in the title, which gives them a far better chance of executing correctly.

Scope is enforced by default. Every spec includes an explicit "Not Building" section. This is not optional and it's not a text field you fill in manually — it's part of the automated output. Scope creep starts where the spec ends. Tekk closes that gap structurally.

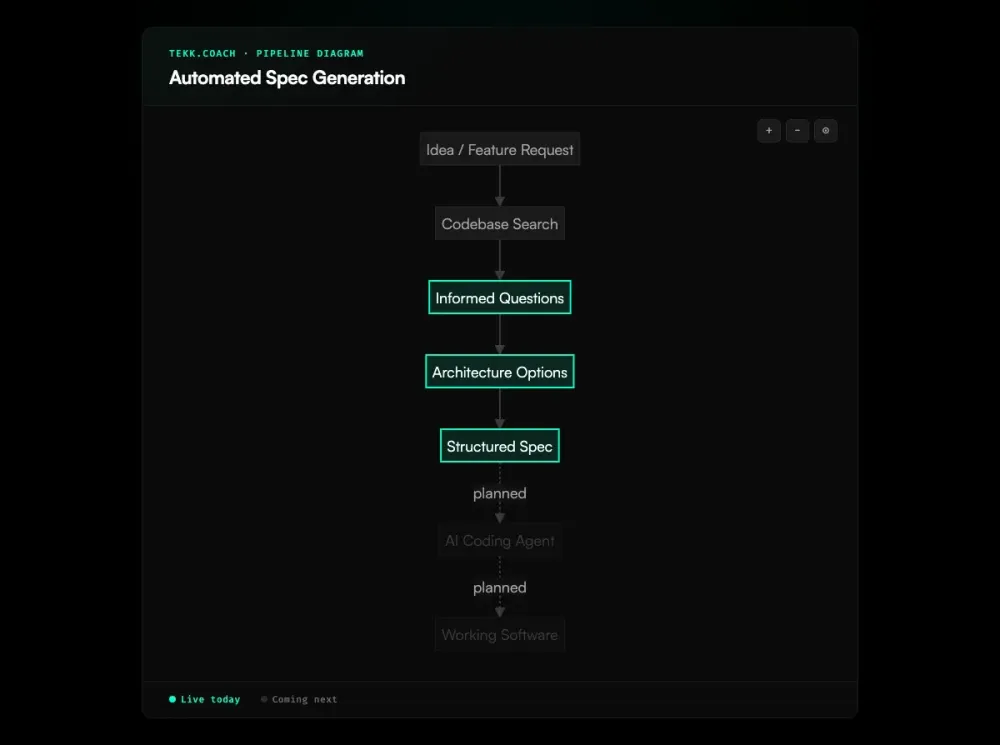

Multi-turn, not one-shot. The best specs come from informed questions, not a single prompt. GitHub's spec-driven development toolkit is built on the same idea: the spec serves as the source of truth that agents use to generate, test, and validate code. Tekk's Search → Questions → Options → Plan workflow ensures the spec reflects your actual system constraints and your actual decisions, not the AI's best guess at both.

An editable document, not a chat message. The spec lives in your task editor, not in a chat thread. It's persistent, editable by you and your team, and linked to your kanban card. Close the chat, reopen the task — your spec is still there.

Your coding agents can execute it. The spec format (behavioral subtasks with acceptance criteria and file references) is designed specifically for AI coding agents. Research from Augment Code on AI coding agent performance reports that models achieve only 19.36% Pass@1 on multi-file tasks, compared with 87.2% on single-function benchmarks. A precise spec is how Tekk aims to narrow that gap: better inputs, better outputs. The spec is the moat.

How It Works

Step 1: Connect your repository. GitHub, GitLab, or Bitbucket. Tekk's agent indexes it with semantic search so every spec is grounded in your actual code, not generic patterns.

Step 2: Describe what you're building. Plain language. No special format required. "Add magic link auth," "Build a CSV export endpoint," "Refactor the payment service to support multi-currency." The agent handles the rest.

Step 3: Answer 3-6 questions. Questions are specific to what the agent found in your code. Not templates. You're answering questions about your actual architectural constraints — and you get a spec that reflects your actual decisions.

Step 4: Review architectural options (when applicable). When there are genuinely different approaches, the agent presents 2-3 options with honest tradeoffs. You choose the direction. When there's an obvious path, this step is skipped.

Step 5: Review your spec. The complete spec streams into the BlockNote editor in real time. It includes a TL;DR, Building/Not Building scope, subtasks with acceptance criteria and file references, dependency ordering, assumptions with risk levels, and validation scenarios. Edit anything, then execute.
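To make the section list above concrete, here is a minimal Python sketch of how such a spec could be modeled as data. The class and field names (`Spec`, `Subtask`, `Assumption`, `Risk`, `problems`) and the example values are hypothetical illustrations, not Tekk's actual schema or API. The `problems` method only shows how a required Not Building section and per-subtask acceptance criteria can be enforced structurally rather than left to a free-text field.

```python
from dataclasses import dataclass, field
from enum import Enum


# Hypothetical model of the spec sections described above; not Tekk's real schema.
class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class Subtask:
    id: str
    title: str
    acceptance_criteria: list[str]
    file_references: list[str] = field(default_factory=list)
    depends_on: list[str] = field(default_factory=list)  # ids of other subtasks


@dataclass
class Assumption:
    statement: str
    risk: Risk
    if_wrong: str  # consequence if the assumption turns out to be false


@dataclass
class Spec:
    tldr: str
    building: list[str]
    not_building: list[str]
    subtasks: list[Subtask]
    assumptions: list[Assumption] = field(default_factory=list)
    validation_scenarios: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """Return structural problems; an empty list means every required part is present."""
        issues = []
        if not self.not_building:
            issues.append("missing Not Building section")
        ids = {t.id for t in self.subtasks}
        for t in self.subtasks:
            if not t.acceptance_criteria:
                issues.append(f"{t.id}: no acceptance criteria")
            for dep in t.depends_on:
                if dep not in ids:
                    issues.append(f"{t.id}: unknown dependency {dep}")
        return issues


# Usage with hypothetical values: the empty Not Building list is flagged.
spec = Spec(
    tldr="Add magic link auth",
    building=["Email magic link login"],
    not_building=[],
    subtasks=[Subtask("s1", "Token table", ["Tokens expire after 15 minutes"], ["db/schema.sql"])],
)
print(spec.problems())  # ['missing Not Building section']
```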

Who This Is For

Founders and solo builders shipping with AI coding agents. You don't have time to write 20-page PRDs, and you shouldn't need to. Tekk generates a precise, executable spec from a plain-language description in under 10 minutes. When you need a full product requirements document before feature-level specs, the AI PRD generator covers that upstream step.

Product managers and technical PMs who need specs that are grounded in system reality, not organizational narrative. If your specs keep getting revised during implementation because the engineer found architectural conflicts the spec didn't account for — Tekk's codebase-first approach fixes this.

Developers using Cursor, Codex, or Claude Code who've learned that an agent is only as good as what you give it. As Addy Osmani writes in "How to Write a Good Spec for AI Agents," small, focused context beats one giant prompt, and spec quality is the ceiling on agent output quality. Tekk is the tool that produces the inputs your agents need.

Not for teams that want spec templates to fill in manually. Not for enterprises that need spec governance workflows. Tekk automates spec generation — it doesn't provide a spec management system.

What Is Automated Spec Generation?

Automated spec generation refers to AI systems that produce structured software specifications from natural language input, reducing or eliminating manual spec-writing effort. Academic research on LLM-based requirements generation indicates that large language models can meaningfully improve the efficiency of requirements engineering. The output of a spec generator is not a narrative document. It's a structured artifact with acceptance criteria, scope boundaries, subtask sequencing, and file-level guidance that a coding agent can execute against.

The term covers a wide capability range. At the low end: AI reformats your description as bullet points. At the high end: AI reads your codebase, conducts an informed planning dialogue, presents architectural options, and produces a complete spec with traceable acceptance criteria and explicit scope enforcement. The difference in output quality — and downstream coding agent performance — is significant.

The demand for automated spec generation has risen alongside AI coding agents — over 15 major spec-driven development platforms launched between 2024 and 2025, and the AI code generation market is projected to grow from $4.91 billion in 2024 to $30.1 billion by 2032. As developers hand more implementation work to autonomous agents, the spec quality ceiling has become the performance ceiling. Agents executing against vague prompts produce vague code. The discipline of spec-driven development — write a precise spec, then execute — is being automated by tools like Tekk.coach because it's both high-value and previously high-effort.

Frequently Asked Questions

What is automated spec generation?

Automated spec generation is the use of AI to produce structured software specifications from natural language descriptions, typically including: acceptance criteria per subtask, explicit scope boundaries, architectural guidance, and dependency ordering. The goal is to reduce manual spec-writing effort while improving spec quality — particularly the quality of inputs given to AI coding agents.

How does AI generate a software spec?

The best AI spec generation is codebase-first: the AI reads your repository before generating anything, then conducts a multi-turn planning dialogue to surface architectural constraints and decisions, then produces a complete structured spec. Lower-quality tools take a one-shot approach: you describe the feature, AI reformats it as a spec. The difference is whether the output is grounded in your actual system.

What does an auto-generated spec include?

A Tekk.coach spec includes: a TL;DR summary (what we're building and why); a Building section (explicit in-scope requirements); a Not Building section (explicit out-of-scope items, which is the scope protection); subtasks, each with acceptance criteria, file references, and dependency links; assumptions with risk levels and the consequences if they're wrong; and validation scenarios. This structure is designed for execution by AI coding agents. For teams running multiple agents, AI agent orchestration handles decomposing the spec into parallel execution waves.
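Dependency links are what make parallel execution possible. As an illustration only (this is not Tekk's orchestration code), the sketch below groups subtasks into waves using a layered variant of Kahn's topological sort: each wave holds every subtask whose dependencies all finished in earlier waves, so subtasks within one wave could run in parallel. The function name `execution_waves` and the example subtask ids are hypothetical.

```python
def execution_waves(deps: dict[str, list[str]]) -> list[list[str]]:
    """Group subtasks into waves (layered Kahn's algorithm).

    `deps` maps each hypothetical subtask id to the ids it depends on.
    Every subtask lands in the first wave after all of its dependencies.
    """
    unknown = set().union(*deps.values()) - deps.keys()
    if unknown:
        raise ValueError(f"unknown dependencies: {sorted(unknown)}")

    remaining = {task: set(d) for task, d in deps.items()}
    done: set[str] = set()
    waves: list[list[str]] = []
    while remaining:
        # Ready = every dependency already completed in an earlier wave.
        ready = sorted(t for t, d in remaining.items() if d <= done)
        if not ready:
            raise ValueError(f"dependency cycle among: {sorted(remaining)}")
        waves.append(ready)
        done.update(ready)
        for t in ready:
            del remaining[t]
    return waves


# Usage with hypothetical subtasks for a magic link auth feature.
deps = {
    "schema": [],
    "api": ["schema"],
    "email": ["schema"],
    "ui": ["api"],
    "tests": ["api", "email"],
}
print(execution_waves(deps))  # [['schema'], ['api', 'email'], ['tests', 'ui']]
```

Sorting each wave only makes the output deterministic; an orchestrator could dispatch the members of a wave in any order.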

How is automated spec generation different from asking ChatGPT to write a spec?

ChatGPT doesn't know your codebase. It generates a spec for a generic version of your feature type, not a spec grounded in your actual system. Tekk reads your repository before generating anything — the spec that comes out references your actual files, frameworks, and patterns. Additionally, ChatGPT output is a chat message. Tekk output is a persistent, editable document linked to your kanban task.

Can automated spec generation work with existing codebases?

Yes — and it works better with an existing codebase than with a greenfield project. Tekk's codebase reading is what makes the spec grounded. It finds your existing patterns, frameworks, services, and dependencies before asking a single question. The spec reflects your actual architecture, not a theoretical architecture for your feature type.

What's the difference between automated spec generation and a PRD?

A PRD (Product Requirements Document) is typically narrative: it explains the feature for stakeholders, describes business value, and sets context. A spec is engineering-precise: it defines what to build, what not to build, how to verify it, and in what order to build it. Tekk generates specs — structured artifacts designed for execution, not for alignment meetings. If you need a stakeholder narrative, that's a different document.

How do I get started with automated spec generation in Tekk.coach?

Connect your GitHub, GitLab, or Bitbucket repository, describe what you're building in plain language, and work through the 3-6 questions the agent asks based on your code. The spec generates automatically. No templates to fill in, no special formatting required.

Ready to Try Tekk.coach?

Your coding agent needs a spec, not a paragraph. Connect your repo and get a codebase-grounded specification in minutes — complete with scope boundaries, acceptance criteria, and everything your agents need to execute correctly.

[Start Planning Free →]