Your coding agent is only as good as its prompt. You've probably already learned this the hard way: vague description, vague result, rework. The promise of spec-driven development is that you let AI generate the spec, grounded in your actual codebase, so your agent executes correctly the first time.

Tekk.coach is where that happens. Connect your repo, describe the feature, and the agent reads your code before asking a single question. What comes out is a structured, editable spec your coding agent can execute — not a chat message, not a template, not a generic plan.

[Try Tekk.coach Free →]

Key Benefits

Specs grounded in your actual code

The agent reads your repository before writing a single line. Output references real files, real patterns, and real dependencies, not boilerplate that contradicts your existing architecture.

Explicit scope protection

Every plan has a "Not Building" section. You know exactly what's in and what's out before your coding agent starts. Scope creep is defined away, not managed after the fact.

Living document, not a static file

The spec streams into a BlockNote editor as the working document. Edit it, refine it, link it to a kanban card. It doesn't drift into irrelevance because it's the document you're executing from.

Works with any coding agent

Tekk doesn't replace Cursor, Codex, or Claude Code. It makes them dramatically more effective by giving them the spec they need to do the right thing. Bring your existing agents; Tekk handles the planning layer.

Multi-turn planning with persistent context

Sessions persist across turns. The agent builds context over the conversation (clarifying questions, architectural options, trade-off discussions) rather than starting fresh on every message.

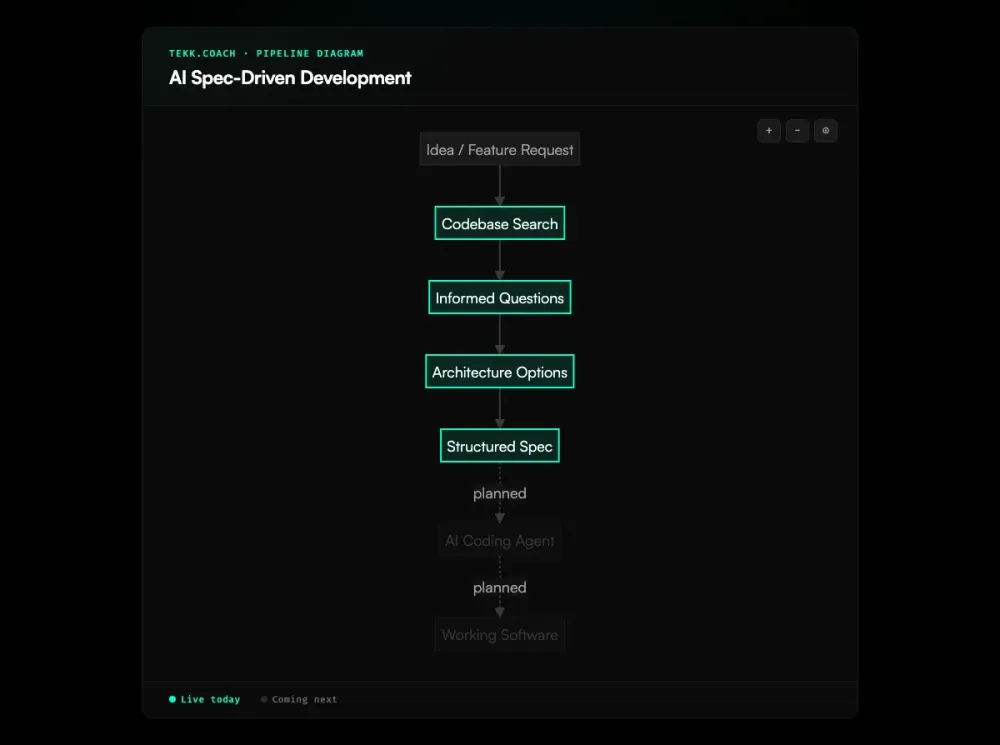

How It Works

Step 1: Connect your repo

Link your GitHub, GitLab, or Bitbucket repository. Tekk indexes the codebase: languages, frameworks, services, packages. This takes a few minutes the first time.

Step 2: Describe the feature

Open a new task and describe what you want to build, in as much or as little detail as you have. The agent doesn't need a spec to create a spec. A paragraph is enough to start.

Step 3: Agent reads the codebase

Before asking anything, the agent searches your repository. It identifies the relevant files, existing patterns, and architectural constraints that will affect this feature. No generic questions, only questions grounded in what it found.

Step 4: Options and questions

The agent asks 3–6 clarifying questions, then presents 2–3 architecturally distinct approaches with honest tradeoffs. You pick the direction.

Step 5: Spec generated

The complete spec streams into the task editor: TL;DR, Building / Not Building scope, subtasks with acceptance criteria and file references, assumptions, validation scenarios. Review it, edit it if needed, then hand it to your coding agent.
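
To make the shape of that spec concrete, here is a minimal sketch of the sections listed in Step 5 as a Python data structure. It is an illustration only, not Tekk's actual schema: the class names, field names, file path, and example feature are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Subtask:
    title: str
    acceptance_criteria: list[str]
    file_refs: list[str]  # repository paths this subtask is expected to touch


@dataclass
class Spec:
    tldr: str
    building: list[str]      # what is in scope
    not_building: list[str]  # what is explicitly out of scope
    subtasks: list[Subtask] = field(default_factory=list)
    assumptions: list[str] = field(default_factory=list)
    validation_scenarios: list[str] = field(default_factory=list)


# Hypothetical example feature; every value below is made up for illustration.
spec = Spec(
    tldr="Add password reset via an emailed one-time link.",
    building=["Reset request endpoint", "Token verification page"],
    not_building=["SMS-based reset", "Admin-initiated reset"],
    subtasks=[
        Subtask(
            title="Create reset token model",
            acceptance_criteria=["Tokens expire after 30 minutes"],
            file_refs=["app/models/reset_token.py"],  # hypothetical path
        )
    ],
    assumptions=["The existing mailer can send transactional email"],
    validation_scenarios=["An expired token shows an error and a retry link"],
)
```

Keeping "not building" as a first-class field, rather than a note in the summary, is what lets a reviewer or a coding agent check scope before any code is written.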

Who This Is For

Founders and solo builders working with coding agents who've hit the rework wall. You know what you want to build. You're tired of pasting context into chat windows and getting half-right implementations. You want the first run to work.

Small engineering teams (1–10 people) without dedicated architects. When you're building a data pipeline, adding authentication, or refactoring a service, you don't always have someone who knows the right architecture. Tekk fills that gap with specs grounded in your actual code and current best practices.

Product managers who need technically grounded specs, not templates. Tekk produces the kind of specification that a developer can actually execute — with file references, acceptance criteria, and scope boundaries — without requiring the PM to know every implementation detail.

Developers using Cursor, Claude Code, or Codex who are feeling the chaos. Specs in markdown files. Context in chat threads. Rework on features that looked right until they weren't. Tekk is one workspace where the planning, the spec, and the task management connect.

What Is AI Spec-Driven Development?

Spec-driven development is a methodology where a specification — defining what software should do, its constraints, and its acceptance criteria — is written before any code. The spec becomes the source of truth. Code is derived from it, not the other way around.

The "AI" qualifier changes who writes the spec. In traditional spec-driven development, a human author writes the specification document. In AI spec-driven development, an AI agent generates the spec — from a developer's description, from codebase context, from external research. The developer reviews and approves, but doesn't author from scratch.

This matters because writing a good spec is hard. Identifying edge cases, defining scope boundaries, grounding requirements in the actual codebase — these require expertise and time that most developers don't have on tap for every feature. AI spec generation removes that bottleneck.

The field emerged from a well-documented quality gap: AI coding agents produce significantly better output when given structured specifications than when given informal prompts. Studies cite 50–80% implementation time savings for well-specified features and error reductions of up to 50%. By early 2026, more than 30 frameworks and tools had organized around this insight. Thoughtworks listed spec-driven development in its Technology Radar as one of the most important practices to emerge from the AI coding wave.

Frequently Asked Questions

What is AI spec-driven development?

AI spec-driven development is a workflow where an AI agent generates a structured specification before any code is written. The spec defines requirements, scope, acceptance criteria, and implementation approach — grounded in the developer's intent and the actual codebase. The coding agent then executes against that spec rather than an informal description.

How is AI spec-driven development different from just prompting a coding agent?

When you prompt a coding agent directly, output quality depends entirely on prompt quality. Most developers don't write great prompts — they describe what they want at a high level and let the agent fill in the gaps. AI spec-driven development inverts this: the AI generates a complete, structured plan first, you review and approve it, then the coding agent executes. The extra step produces dramatically less rework.

How does AI spec-driven development work with Cursor?

Tekk.coach generates the structured spec, including file references, acceptance criteria, subtasks, and scope boundaries. You copy or export that spec and use it as the prompt for Cursor's agent or composer. Cursor then has a precise, codebase-grounded instruction set rather than a paragraph of intent. The result is fewer back-and-forth cycles and less scope drift. For running multiple agents in parallel against the same spec, Tekk's AI agent orchestration layer handles the decomposition and dispatch.
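
As a rough sketch of what "use the spec as the prompt" can look like, the function below flattens a spec into plain text you could paste into a coding agent. This is not Tekk's export format; the dictionary keys, the `render_prompt` helper, and the example content are assumptions made for illustration.

```python
def render_prompt(spec: dict) -> str:
    """Flatten a spec dictionary into a plain-text prompt for a coding agent."""
    lines = [f"TL;DR: {spec['tldr']}", "", "Building:"]
    lines += [f"- {item}" for item in spec["building"]]
    # State the exclusions explicitly so the agent does not drift out of scope.
    lines += ["", "Not building (do not implement):"]
    lines += [f"- {item}" for item in spec["not_building"]]
    for n, task in enumerate(spec["subtasks"], start=1):
        lines += ["", f"Subtask {n}: {task['title']}"]
        lines.append("Files: " + ", ".join(task["file_refs"]))
        lines += [f"- [ ] {criterion}" for criterion in task["acceptance_criteria"]]
    return "\n".join(lines)


# Hypothetical spec content, for illustration only.
spec = {
    "tldr": "Add password reset via an emailed one-time link.",
    "building": ["Reset request endpoint"],
    "not_building": ["SMS-based reset"],
    "subtasks": [
        {
            "title": "Create reset token model",
            "file_refs": ["app/models/reset_token.py"],  # hypothetical path
            "acceptance_criteria": ["Tokens expire after 30 minutes"],
        }
    ],
}
prompt = render_prompt(spec)
print(prompt)
```

The point of the sketch is the ordering: scope boundaries come before the subtasks, and each subtask carries its own file references and checkable criteria.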

How does AI spec-driven development work with Claude Code?

The same principle applies. Tekk generates the spec based on your codebase; Claude Code executes it. Tekk's multi-agent orchestration layer (coming next) will dispatch directly to Claude Code via MCP integration, with approved subtasks batched into coherent jobs.

What makes a good AI-generated spec?

A good AI-generated spec is codebase-grounded (references real files and patterns), explicitly scoped (defines what's not being built as well as what is), decomposed into testable subtasks with acceptance criteria, and aware of assumptions and their risk levels. Generic specs — produced without reading the codebase — look complete but produce poor implementations because they don't account for the actual architecture.
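
Those criteria can be turned into a simple checklist. The sketch below is one possible way to flag a spec that looks complete but is missing the properties above; the `spec_issues` function, the dictionary layout, and the risk labels are hypothetical, and the check is purely structural (it does not verify that referenced files actually exist in a repository).

```python
def spec_issues(spec: dict) -> list[str]:
    """Return human-readable problems; an empty list means every check passed."""
    issues = []
    # Explicitly scoped: the spec must say what is NOT being built.
    if not spec.get("not_building"):
        issues.append("missing 'not building' scope")
    subtasks = spec.get("subtasks", [])
    if not subtasks:
        issues.append("no subtasks")
    for i, task in enumerate(subtasks, start=1):
        # Testable: each subtask needs acceptance criteria.
        if not task.get("acceptance_criteria"):
            issues.append(f"subtask {i} has no acceptance criteria")
        # Codebase-grounded: each subtask should point at real files.
        if not task.get("file_refs"):
            issues.append(f"subtask {i} references no files")
    # Assumption-aware: each assumption carries a risk level.
    for assumption in spec.get("assumptions", []):
        if assumption.get("risk") not in {"low", "medium", "high"}:
            issues.append(f"assumption without risk level: {assumption.get('text', '?')}")
    return issues


# A generic spec of the kind produced without reading the codebase.
generic_spec = {
    "not_building": [],
    "subtasks": [{"title": "Add auth", "acceptance_criteria": [], "file_refs": []}],
    "assumptions": [{"text": "Users have email"}],
}
issues = spec_issues(generic_spec)
print(issues)
```

For the generic example, all four kinds of problem are reported, which is the pattern the paragraph above describes: the document has every section, but none of them is grounded.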

Is AI spec-driven development worth it for small features?

For simple, well-understood tasks — bug fixes, small UI changes, one-line modifications — the overhead isn't worth it. AI spec-driven development pays off for complex features, cross-cutting changes, or work in unfamiliar domains. The skill is knowing which is which.

What tools exist for AI spec-driven development in 2026?

The main tools split into two categories: living-spec platforms (Tekk.coach, Augment Code/Intent) that keep specs connected to execution; and static-spec + IDE tools (Kiro, GitHub Spec Kit, OpenSpec, BMAD-METHOD) that generate structured documents upfront but require manual reconciliation when specs drift from code.

Can AI spec-driven development replace manual spec writing?

For most features, yes — the AI generates a better spec faster than a developer would write one manually, because it reads the codebase and asks the right questions. For architectural decisions with strategic implications, human judgment should shape the spec. The agent generates; you review and approve.

Ready to Try Tekk.coach?

If your coding agents are producing half-right implementations, the fix isn't better prompts — it's a better spec. Connect your repo, describe the feature, and get a structured plan grounded in your actual codebase in minutes.

Your agents ship correctly when they know exactly what to build.

[Start Planning Free →]