Your AI coding agents are only as precise as their inputs. You write a task description. The agent interprets it generously. The code that comes back is plausible, not correct — because the agent didn't have traceable acceptance criteria, explicit scope, or any awareness of how this feature touches your existing system. That's not an agent problem. That's a requirements problem. The Standish Group's CHAOS research has consistently identified clear requirements as a top-three success factor for software projects — and unclear requirements as the leading cause of failure.

Tekk.coach generates structured, codebase-grounded requirements before any code is written. The agent reads your repository, surfaces the architectural constraints you didn't know to ask about, and produces requirements with acceptance criteria per subtask: not narrative text, not formatted bullet points, but structured requirements your coding agents can actually execute against. This is the engineering-level output that makes spec-driven development work at scale, with every requirement traceable from intent to subtask to test case.

[Try Tekk.coach Free →]

Key Benefits

Requirements grounded in your actual system. Tekk reads your codebase before generating a single requirement. The output references your real files, frameworks, ORM patterns, and architectural constraints. Requirements that don't match your system cause rework — research shows that rework from errant requirements consumes 28% to 42% of a project's development costs. Requirements grounded in your system prevent it.

Acceptance criteria built in, for every subtask. Every subtask includes concrete, verifiable pass/fail criteria. Not "user is authenticated" but "user receives a 200 response and session cookie is set, magic link expires after 15 minutes, failed attempt returns 401 with standardized error payload." Missing acceptance criteria are where test coverage gaps and implementation disputes come from. Recent IEEE research on multi-modal acceptance criteria generation confirms that LLMs can produce verifiable criteria from requirements data, and Tekk applies this automatically by building criteria into every subtask.

Explicit scope enforcement. The "Not Building" section is not optional text you fill in later. It's a required output of every planning session. What's out of scope is as important as what's in scope, and Tekk generates both.

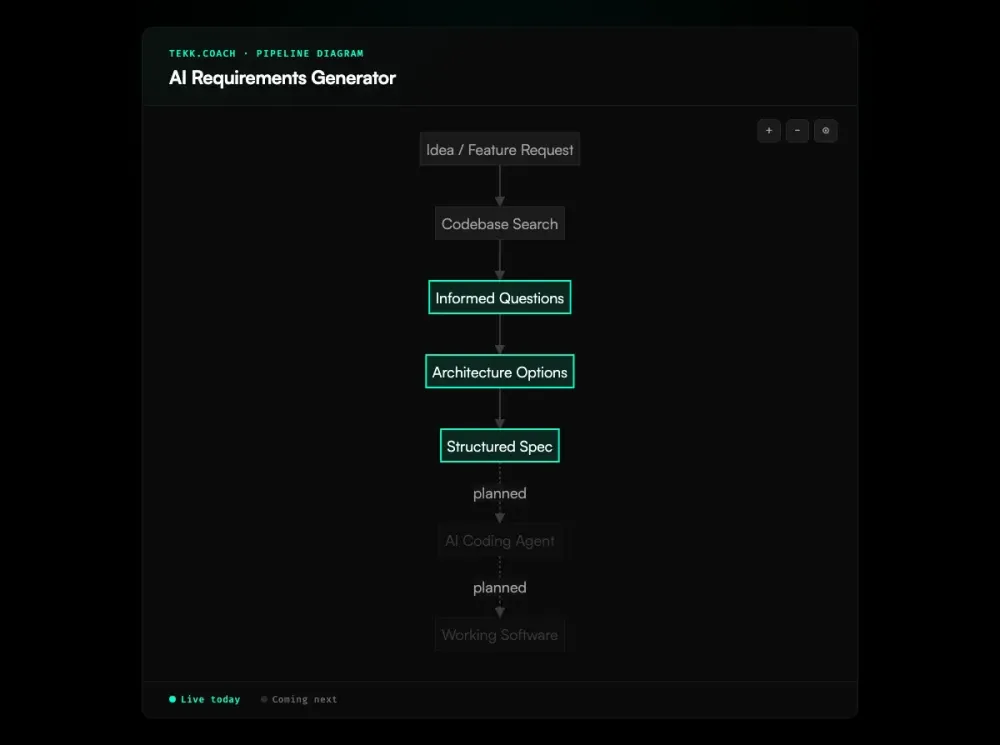

Multi-turn planning, not one-shot generation. Good requirements come from informed questions. Tekk's workflow is Search → Questions → Options → Plan. The agent reads your code, asks questions about actual constraints it found, and optionally presents architectural options with tradeoffs. The requirements reflect your decisions, not the AI's assumptions.

Requirements your coding agents can execute. The output format (behavioral subtasks with acceptance criteria and file references) is designed for AI coding agents. Cursor, Codex, and Claude Code perform significantly better against precise, structured requirements than against task descriptions. As real-world developer evaluations consistently show, agent output quality is bounded by input quality, which makes structured requirements the highest-leverage improvement. For teams running multiple agents against the same requirements, AI agent orchestration coordinates their execution so nothing conflicts.

How It Works

Step 1: Connect your codebase. GitHub, GitLab, or Bitbucket. Tekk's agent indexes your repository with semantic search so every requirement references real code.

Step 2: Describe what you need. Plain language. "Add multi-tenant support to the user model." "Build a webhook ingestion pipeline." "Implement RBAC on the API layer." The agent takes it from there.

Step 3: Answer architecture-grounded questions. The agent asks 3-6 questions based on what it found in your code. These questions surface constraints you may not have considered — existing patterns that constrain implementation choices, dependencies that affect ordering, assumptions that need to be made explicit.

Step 4: Review architectural options (when applicable). When multiple valid approaches exist, the agent presents 2-3 options with honest tradeoffs. You decide the direction before requirements are locked.

Step 5: Review and edit your requirements. The complete requirements output streams into the editor in real time. Every subtask has acceptance criteria. Scope is explicit. Dependencies are ordered. Edit anything before execution begins.
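To make "dependencies are ordered" concrete, here is a minimal sketch of how ordered subtasks can be derived from dependency links. It is an illustration only, not Tekk's implementation: the subtask names and the `execution_order` helper are hypothetical, and the ordering uses Python's standard-library `graphlib`.

```python
from graphlib import TopologicalSorter

# Hypothetical subtasks for "add multi-tenant support to the user model".
# Each key maps to the set of subtasks that must finish before it can start.
subtasks = {
    "add_tenant_id_column": set(),
    "backfill_tenant_ids": {"add_tenant_id_column"},
    "scope_queries_by_tenant": {"add_tenant_id_column"},
    "enforce_not_null_tenant_id": {"backfill_tenant_ids"},
}


def execution_order(deps):
    """Return subtasks in an order where every dependency comes first.

    Raises graphlib.CycleError if the dependency links form a cycle.
    """
    return list(TopologicalSorter(deps).static_order())


order = execution_order(subtasks)
print(order)
```

A coding agent (or a human) working through `order` from left to right never starts a subtask before the work it depends on is done.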

Who This Is For

Engineers and technical leads who need requirements that are precise enough to produce predictable code from an AI agent. If your team's AI-generated code keeps diverging from intent, the requirements are the problem. Industry data shows requirements errors cost 29x more to fix after deployment than during the requirements phase, so precision up front is not optional.

Technical PMs building with AI coding agents who need requirements grounded in system architecture, not just user stories. You've written enough "As a user, I want..." stories that got misimplemented because the engineer (or the agent) couldn't infer the system constraints.

Founders and solo builders who understand what requirements are and don't want to write them manually for every feature. Connect your repo, describe the feature, answer 3-6 questions, and get production-quality requirements in under 10 minutes.

Not for teams that want narrative PRDs for stakeholder alignment. Not for product work that doesn't touch code. Tekk generates technical requirements for engineering execution — that's its scope, intentionally.

What Is an AI Requirements Generator?

An AI requirements generator uses language models to produce structured software requirements from natural language input. Unlike PRD generators, which produce narrative documentation for stakeholders, requirements generators produce engineering artifacts: acceptance criteria, functional requirements, dependency ordering, and scope boundaries that can be traced from requirement to implementation to test. If your team also needs the stakeholder-facing document, an AI PRD generator produces that layer from the same codebase context.

The technical framing matters. "Requirements" implies traceability — each requirement maps to a subtask and a test case. It implies acceptance criteria — concrete, verifiable conditions that define when a requirement is satisfied. It implies scope management — explicit in/out-of-scope designation, not just a description of what's being built.
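As a rough sketch of what traceability means in practice, the check below flags any requirement that lacks a subtask or a test case. The `Requirement` class, the ID scheme (`REQ-`, `ST-`, `T-`), and the `untraced` helper are hypothetical names chosen for illustration, not Tekk's data model.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str
    text: str
    subtask_ids: list = field(default_factory=list)  # subtasks that implement it
    test_ids: list = field(default_factory=list)     # test cases that verify it


def untraced(requirements):
    """Return IDs of requirements missing a subtask or a test case."""
    return [r.req_id for r in requirements if not r.subtask_ids or not r.test_ids]


reqs = [
    Requirement("REQ-1", "Authenticate users via magic link", ["ST-1"], ["T-1", "T-2"]),
    Requirement("REQ-2", "Expire magic links after 15 minutes", ["ST-2"], []),
]
print(untraced(reqs))  # ['REQ-2']
```

Here `REQ-2` has a subtask but no test case, so the chain from requirement to verification is broken and the check reports it.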

AI requirements generation became strategically important alongside the rise of AI coding agents. A 2026 systematic literature review found that generative AI applications in requirements engineering have grown exponentially — from 4 publications in 2022 to 113 in 2024 — with GPT-based models powering 67.3% of implementations. The quality ceiling for any coding agent is the quality of the requirements it receives. As developers hand more implementation work to Cursor, Codex, and Claude Code, the precision of requirements has become the primary variable in code quality. Tools that generate these requirements from codebase context — not just from natural language descriptions — produce measurably better agent outputs.

Frequently Asked Questions

What is an AI requirements generator?

An AI requirements generator is a tool that produces structured software requirements — including acceptance criteria, scope boundaries, dependency ordering, and subtask sequencing — from natural language input and, in better implementations, from actual codebase analysis. The output is an engineering artifact designed for implementation execution, not a narrative document for stakeholder review.

How does AI generate software requirements?

In Tekk.coach, requirements generation follows a four-phase workflow: Search (agent reads your codebase using semantic search, file search, and directory analysis), Questions (3-6 questions grounded in what the agent found), Options (optional: 2-3 architectural approaches with tradeoffs), Plan (complete structured requirements streamed into an editable document). This multi-turn approach produces requirements that reflect your actual system constraints — not a one-shot prompt's best guess at them.

What does AI-generated requirements output include?

Tekk's requirements output includes: a TL;DR summary; a Building section (explicit in-scope functional requirements); a Not Building section (explicit out-of-scope); subtasks with acceptance criteria, file references, and dependency links; assumptions with risk levels and consequences; and end-to-end validation scenarios. Every subtask has concrete, testable pass/fail criteria, not vague intent statements.
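One way to picture that output is as a typed structure a script or agent could validate before execution. The sketch below mirrors the sections listed above, but every class name, field name, and file path is an assumption made for illustration. It does not describe Tekk's actual schema or export format.

```python
from dataclasses import dataclass, field


@dataclass
class Subtask:
    subtask_id: str
    description: str
    acceptance_criteria: list[str]
    file_refs: list[str] = field(default_factory=list)
    depends_on: list[str] = field(default_factory=list)


@dataclass
class Assumption:
    statement: str
    risk: str          # e.g. "low", "medium", "high"
    consequence: str   # what happens if the assumption is wrong


@dataclass
class RequirementsDoc:
    tldr: str
    building: list[str]
    not_building: list[str]
    subtasks: list[Subtask]
    assumptions: list[Assumption] = field(default_factory=list)
    validation_scenarios: list[str] = field(default_factory=list)


def problems(doc):
    """List structural gaps: empty scope exclusions, missing criteria, bad links."""
    issues = []
    if not doc.not_building:
        issues.append("Not Building section is empty")
    known = {s.subtask_id for s in doc.subtasks}
    for s in doc.subtasks:
        if not s.acceptance_criteria:
            issues.append(f"{s.subtask_id}: no acceptance criteria")
        for dep in s.depends_on:
            if dep not in known:
                issues.append(f"{s.subtask_id}: unknown dependency {dep}")
    return issues


doc = RequirementsDoc(
    tldr="Add magic-link sign-in",
    building=["Email magic-link authentication"],
    not_building=["Password login", "Social sign-in"],
    subtasks=[
        # "auth/links.py" is a made-up path used only for this example.
        Subtask("ST-1", "Issue magic link", ["Link expires after 15 minutes"], ["auth/links.py"]),
        Subtask("ST-2", "Redeem magic link", [], depends_on=["ST-1", "ST-9"]),
    ],
)
print(problems(doc))
```

The example document is deliberately incomplete: `ST-2` has no acceptance criteria and points at a subtask that does not exist, so `problems` reports both gaps.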

How is an AI requirements generator different from a PRD tool?

A PRD tool generates narrative documentation: feature descriptions, business context, user stories, stakeholder communication. A requirements generator produces structured engineering artifacts: acceptance criteria, dependency ordering, scope enforcement, traceable subtasks. These are different documents for different audiences. Tekk generates the latter — technical requirements for coding agent execution, not for alignment meetings.

Can an AI requirements generator read my existing codebase?

Tekk does this by design. The agent reads your repository before generating any requirement — using semantic search, regex lookup, file analysis, and repository profiling. Requirements generated without this context are aspirational; requirements generated from it are grounded. Tekk supports GitHub, GitLab, and Bitbucket.

What's the difference between requirements and acceptance criteria?

Requirements define what the system must do. Acceptance criteria define how you verify that it does it. Example requirement: "The system shall authenticate users via magic link." Acceptance criteria: "User receives email within 60 seconds. Magic link is single-use. Link expires after 15 minutes. Successful auth sets session cookie and redirects to dashboard. Expired link returns 401 with standardized error message." Good AI requirements generation produces both — Tekk generates acceptance criteria as part of every subtask, not as a separate step.
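Acceptance criteria written this precisely translate almost directly into executable checks. The sketch below encodes three of the criteria above against a toy in-memory model. `MagicLink`, `redeem`, and `LINK_TTL` are hypothetical names, and returning 401 for a reused link is an assumption (the criteria above only specify 401 for an expired link).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

LINK_TTL = timedelta(minutes=15)  # "Link expires after 15 minutes"


@dataclass
class MagicLink:
    issued_at: datetime
    used: bool = False


def redeem(link, now):
    """Return an HTTP-style status code for an attempt to use the link."""
    if link.used or now - link.issued_at > LINK_TTL:
        return 401  # reused or expired link is rejected
    link.used = True
    return 200


issued = datetime(2025, 1, 1, 12, 0)

# Criterion: a fresh link authenticates successfully.
fresh = MagicLink(issued)
assert redeem(fresh, issued + timedelta(minutes=5)) == 200

# Criterion: magic link is single-use (assumed to return 401 on reuse).
assert redeem(fresh, issued + timedelta(minutes=6)) == 401

# Criterion: link expires after 15 minutes and an expired link returns 401.
stale = MagicLink(issued)
assert redeem(stale, issued + timedelta(minutes=16)) == 401
```

Each assertion corresponds to exactly one criterion, which is what makes a failure easy to trace back to the requirement it violates.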

How do I get AI to generate precise, testable requirements?

The two factors that most improve AI requirements quality are: (1) codebase grounding, where the AI reads your actual system before generating anything, so requirements don't conflict with existing architecture; and (2) multi-turn dialogue, where the AI asks informed questions before committing to a spec direction, surfacing constraints and decisions that one-shot generation misses. A 2025 practitioner survey found that over 90% of AI-for-requirements-engineering (RE) implementations remain at early-stage development, with reproducibility and hallucinations cited as top barriers to trust. Multi-turn, codebase-grounded workflows directly address both barriers, and Tekk.coach is built around that workflow. The alternative, prompting a general-purpose LLM to "generate requirements" from a description, produces template-quality output without either property.

Ready to Try Tekk.coach?

Stop giving your coding agents vague inputs and hoping for precise outputs. Connect your repo, answer 3-6 questions, and get structured requirements with acceptance criteria that your agents can actually execute against.

[Start Planning Free →]