You have Cursor. You have Claude Code. Maybe Codex. Each one can write code. None of them coordinate. You're the one deciding which agent gets which task, pasting context between tools, resolving the conflicts when two agents touched the same file. That's not a workflow — that's manual coordination with extra steps.

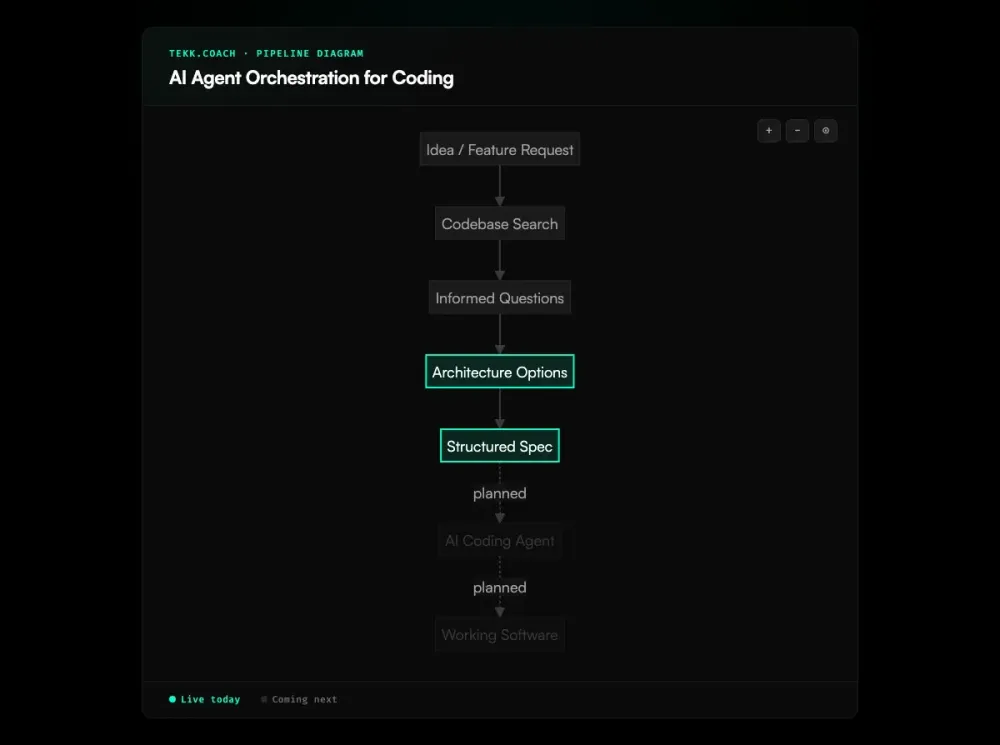

AI agent orchestration for coding means the agents work together, in the right order, with the right context, against a spec that was generated from your actual codebase. Tekk.coach is that orchestration layer — built specifically for the coding workflow, not retrofitted from a general-purpose agent framework.

[Try Tekk.coach Free →]

Key Benefits

The spec that makes agents effective. Your agent is only as good as its inputs. Tekk generates inputs that go well beyond a one-paragraph prompt: codebase-grounded, acceptance-criteria-complete, file-referenced specs that your coding agents can execute against without flailing. This is spec-driven development applied at the orchestration layer, structured enough for parallel execution and precise enough to prevent rework.

Parallel waves, not sequential queues. Independent subtasks run simultaneously. Dependent subtasks run in sequence, after the work they rely on. Ordering work by dependency prevents the cascade failures that occur when agents edit related files without coordination.

No more manual routing. You stop deciding which agent gets which task. Tekk matches subtask requirements to agent capabilities — execution tasks to Cursor, design-first work to Gemini, MCP-capable tasks to Claude Code. (Coming next.)

BYO agents. Keep your subscriptions. Cursor, Codex, Claude Code, Gemini — connected via OAuth. You're not replacing your agents. You're coordinating them without building coordination infrastructure yourself.

One PR for human review. All agent work merges to a single shared feature branch. You review once. One clean diff, not five separate PRs from five separate agent sessions.

How It Works

Step 1: Connect your codebase. Link GitHub, GitLab, or Bitbucket. Tekk's semantic search indexes your repository so every planning question and every spec subtask references actual code — not generic patterns.

Step 2: Describe the feature. Plain language. "Add Stripe subscription billing." "Build a semantic search endpoint." "Refactor the auth layer to support multi-tenancy." The agent handles the rest.

Step 3: Answer informed questions. The agent asks 3-6 questions grounded in what it found in your code. Not templates. Questions that surface actual architectural constraints and trade-off points in your specific system.

Step 4: Review and edit the spec. The complete spec streams into the BlockNote editor in real time. It includes a TL;DR, Building/Not Building scope, subtasks with acceptance criteria and file references, dependency ordering, risk-flagged assumptions, and validation scenarios. Edit anything before execution.

Step 5: Execute with your agents. (Coming next.) Click Execute. Tekk decomposes approved subtasks by dependency, groups independent subtasks into parallel execution waves, and dispatches each to the right agent via OAuth. All jobs push to one shared feature branch. One PR for your review.
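To make the spec from Step 4 concrete, here is a minimal sketch of how its parts could be modeled as data. This is an illustration, not Tekk's actual schema: every class name, field name, and file path below is hypothetical, chosen only to mirror the sections listed above (TL;DR, Building/Not Building, subtasks with acceptance criteria and file references, dependencies, assumptions, validation scenarios).

```python
from dataclasses import dataclass, field


@dataclass
class Subtask:
    """One unit of work in a spec. All field names are hypothetical."""
    id: str
    title: str
    acceptance_criteria: list[str]
    file_refs: list[str]  # paths the subtask is expected to touch
    depends_on: list[str] = field(default_factory=list)


@dataclass
class Spec:
    """Hypothetical container mirroring the spec sections named in Step 4."""
    tldr: str
    building: list[str]
    not_building: list[str]
    subtasks: list[Subtask]
    assumptions: list[str] = field(default_factory=list)  # risk-flagged
    validation_scenarios: list[str] = field(default_factory=list)

    def unknown_dependencies(self) -> list[tuple[str, str]]:
        """Return (subtask_id, dependency_id) pairs that point at no subtask."""
        known = {s.id for s in self.subtasks}
        return [
            (s.id, dep)
            for s in self.subtasks
            for dep in s.depends_on
            if dep not in known
        ]


# Example data is invented for illustration.
spec = Spec(
    tldr="Add Stripe subscription billing",
    building=["Subscription checkout"],
    not_building=["Invoicing"],
    subtasks=[
        Subtask("schema", "Add subscriptions table",
                ["Migration applies cleanly"], ["db/migrations/"]),
        Subtask("service", "Billing service",
                ["Creates a subscription"], ["src/billing/service.py"],
                depends_on=["schema"]),
    ],
)
print(spec.unknown_dependencies())  # prints []
```

A check like `unknown_dependencies` is the kind of validation worth running before any dispatch: a subtask that depends on something the spec never defines cannot be ordered.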

Who This Is For

Developers running multiple agents and manually routing work between them. If you're copy-pasting specs between chat windows, deciding which agent to use for each subtask, and managing merge conflicts from simultaneous agent sessions — Tekk removes all of that.

Founders and small teams without dedicated engineering coordination. You can't afford to spend half your day being the coordinator between your AI agents. Tekk is the coordination layer you don't have to build. Structured AI project planning before dispatch is what separates teams that ship from teams that rework.

Engineers who've been burned by vague prompts. You know the failure mode: vague task, plausible-sounding code, wrong architecture, rework. LLMs force solutions from whatever context they're given rather than surfacing what they don't know. Tekk's codebase-first planning workflow produces specs that prevent this at the source.

Not for senior architects who already know exactly how to spec every feature and just need an execution agent. Not for enterprise teams that need Jira-style workflow governance. Tekk is for builders who want to move fast with high precision and zero ceremony.

What Is AI Agent Orchestration for Coding?

AI agent orchestration for coding is the coordination of multiple AI coding agents — Cursor, Codex, Claude Code, Gemini — to execute a software feature with dependency-ordered parallelism. The Anthropic 2026 Agentic Coding Trends Report documents how these agents have become the primary execution layer for developers — creating the coordination problem that orchestration tools exist to solve. The orchestration layer sits above the coding agents and handles: task decomposition from a structured spec, dependency graph analysis, agent routing by capability, parallel wave execution, and branch/PR management.
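As a rough illustration of "agent routing by capability", the sketch below maps subtask tags to agents using the pairing described earlier on this page (design-first work to Gemini, MCP-capable tasks to Claude Code, execution tasks to Cursor). The tag names, the rule order, and the use of Cursor as the fallback are assumptions made for the example, not a description of how Tekk routes work.

```python
# Hypothetical routing table: (tag, agent) pairs checked in order.
# Tag names and rule priority are assumptions for illustration only.
ROUTING_RULES = [
    ("design_first", "Gemini"),
    ("needs_mcp", "Claude Code"),
]
DEFAULT_AGENT = "Cursor"  # assumed fallback for plain execution tasks


def route(tags: set[str]) -> str:
    """Return the first agent whose rule tag appears in the subtask's tags."""
    for tag, agent in ROUTING_RULES:
        if tag in tags:
            return agent
    return DEFAULT_AGENT


# Invented subtasks and tags, purely as example input.
assignments = {
    "pricing_page_mockup": route({"design_first"}),
    "sync_issue_tracker": route({"needs_mcp"}),
    "add_billing_endpoint": route(set()),
}
print(assignments)
```

Keeping the rules in a plain ordered list makes the priority explicit: when a subtask carries more than one tag, the earlier rule wins.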

The critical distinction from general AI orchestration is that coding requires a planning phase before execution. The quality of code produced by any agent is bounded by the quality of the spec it receives. VentureBeat's analysis of production AI coding agents found that brittle context windows are the core failure mode — orchestrating bad inputs faster doesn't help. You need the planning layer to generate good inputs first.

This two-layer architecture, a planning layer (Tekk) plus an execution layer (Cursor, Codex, Claude Code), is the correct model. Spec-driven development has emerged as the defining engineering practice for AI-assisted teams. It mirrors how effective engineering teams work: you spec before you build, you sequence before you parallelize. For teams ready to move beyond single-agent workflows, full AI agent orchestration is the coordination layer that routes those specs to the right agents in the right order.

Frequently Asked Questions

How do you orchestrate Cursor, Codex, and Claude Code together?

The correct approach is a two-layer architecture: a planning layer that generates codebase-grounded specs with dependency-ordered subtasks, and an execution layer that dispatches those subtasks to the right agents. Tekk.coach provides the planning layer (live today) and the dispatch layer (coming next). Each coding agent connects via OAuth — the same pattern as GitHub authentication. You keep your existing agent subscriptions.

What is wave-based parallel execution for coding agents?

Wave-based execution groups subtasks by dependency tier. Wave 1 = all subtasks with no dependencies, executed simultaneously by multiple agents. Wave 2 = subtasks that depend on Wave 1 results. Wave 3 depends on Wave 2. This approach maximizes parallel throughput while guaranteeing execution order. For a typical feature: database schema runs in Wave 1, service layer in Wave 2, API layer in Wave 3, frontend in Wave 4. All waves push to a single branch for one clean PR.
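The tiering described above can be sketched as a level-by-level topological grouping. This is a minimal illustration under stated assumptions, not Tekk's implementation: the function name and the input shape (a mapping from subtask name to the set of subtasks it depends on) are hypothetical, and `email_templates` is an invented independent subtask added to show two tasks sharing Wave 1.

```python
def group_into_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group subtasks into waves.

    A subtask joins the earliest wave in which all of its dependencies
    have already completed in previous waves.
    """
    remaining = {task: set(deps) for task, deps in dependencies.items()}
    unknown = {d for deps in remaining.values() for d in deps} - set(remaining)
    if unknown:
        raise ValueError(f"unknown dependencies: {sorted(unknown)}")

    waves: list[list[str]] = []
    done: set[str] = set()
    while remaining:
        # Everything whose dependencies are all done can run in parallel now.
        ready = sorted(t for t, deps in remaining.items() if deps <= done)
        if not ready:
            raise ValueError("dependency cycle detected")
        waves.append(ready)
        done.update(ready)
        for task in ready:
            del remaining[task]
    return waves


feature = {
    "schema": set(),
    "email_templates": set(),  # hypothetical task with no dependencies
    "service": {"schema"},
    "api": {"service"},
    "frontend": {"api"},
}
for number, wave in enumerate(group_into_waves(feature), start=1):
    print(f"Wave {number}: {wave}")
# Wave 1: ['email_templates', 'schema']
# Wave 2: ['service']
# Wave 3: ['api']
# Wave 4: ['frontend']
```

Raising on a cycle matters here: if two subtasks depend on each other, no wave ordering exists, and the spec needs to be fixed before anything runs.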

Why does AI agent orchestration for coding require a planning layer?

Every coding agent is bounded by its inputs. Agent scaffolding quality matters as much as model quality — the planning layer is where that scaffolding is built. Cursor, Codex, and Claude Code are all execution-layer tools — they're excellent at writing code given clear, precise instructions. They struggle with vague prompts, missing context, or underspecified acceptance criteria. The planning layer generates those precise instructions by reading your actual codebase before speccing anything. Orchestration without planning is execution without direction.

Can I use Tekk.coach with just one coding agent?

Yes. The planning workflow — codebase reading, informed questions, spec generation — is useful regardless of how many agents you dispatch to. Many teams start with Tekk's planning layer using a single agent and adopt multi-agent dispatch as they scale. The spec format is the same either way.

How does Tekk.coach handle merge conflicts between agents?

Tekk's dependency ordering and wave-based execution are designed to prevent conflicts at the structural level: subtasks that could conflict are placed in separate waves and run in sequence rather than simultaneously. All agent work pushes to a single shared feature branch. Where conflicts remain possible (e.g., two agents editing the same file in the same wave), the current approach requires human review before merge.
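One way to flag the remaining risk ahead of time is to check, per wave, whether two subtasks reference the same file. The sketch below assumes each subtask's file references are known up front (as they are in the spec); the function name, input shapes, and file paths are hypothetical and do not describe Tekk's internals.

```python
from collections import defaultdict


def same_wave_file_conflicts(
    waves: list[list[str]],
    files_by_task: dict[str, set[str]],
) -> list[tuple[int, str, list[str]]]:
    """Return (wave_number, path, tasks) for files touched by 2+ tasks in one wave."""
    conflicts = []
    for number, wave in enumerate(waves, start=1):
        owners: dict[str, list[str]] = defaultdict(list)
        for task in wave:
            for path in set(files_by_task.get(task, ())):
                owners[path].append(task)
        for path, tasks in sorted(owners.items()):
            if len(tasks) > 1:
                conflicts.append((number, path, sorted(tasks)))
    return conflicts


# Invented example: two UI subtasks in the same wave both edit src/App.tsx.
waves = [["billing_ui", "settings_ui"], ["api"]]
files_by_task = {
    "billing_ui": {"src/App.tsx", "src/Billing.tsx"},
    "settings_ui": {"src/App.tsx"},
    "api": {"src/api.ts"},
}
print(same_wave_file_conflicts(waves, files_by_task))
# [(1, 'src/App.tsx', ['billing_ui', 'settings_ui'])]
```

A flagged pair can then be handled either by adding a dependency between the two subtasks, which pushes one into a later wave, or by marking that file for closer attention during review.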

Is the multi-agent dispatch feature live today?

The planning workflow is live: connect your repo, describe the feature, work through informed questions, review and edit the generated spec. The kanban board, review mode, and web research during planning are also live. Multi-agent execution dispatch — routing subtasks to Cursor, Codex, Claude Code, and Gemini in dependency-ordered parallel waves — is coming next.

What coding agents does Tekk.coach support?

Cursor (primary code execution via Cloud Agents API, webhook-driven), Codex (OpenAI's cloud coding agent), Claude Code (extends Tekk's agent SDK with write-capable MCP tools), and Gemini (design-first tasks, then hands off to Cursor or Codex). All connect via OAuth. Agent support is coming next alongside the execution dispatch feature.

Ready to Try Tekk.coach?

Stop being the coordinator between your AI agents. Connect your repo, describe the feature, and get a codebase-grounded spec that your coding agents can actually execute against — in minutes.

[Start Planning Free →]